As AI agents evolve from simple tool-callers to sophisticated long-term collaborators, memory has become the linchpin of their potential. Yet existing long-term memory solutions face a fundamental paradox: agents often possess a detailed interaction diary but lack a true knowledge reserve.

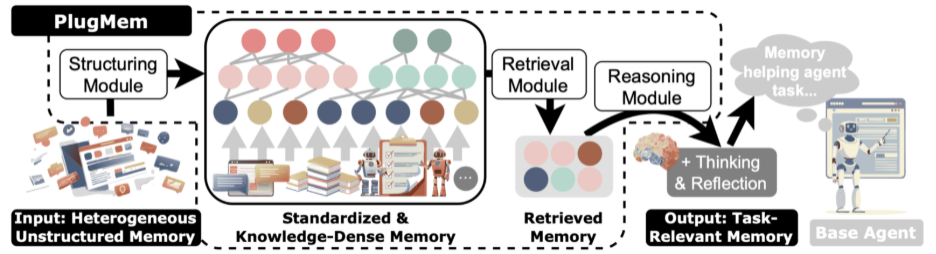

Enter PlugMem, a recent proposal from a joint team at UIUC, Tsinghua University, and Microsoft Research. The paper positions PlugMem as a task-agnostic, pluggable memory module whose core contribution is a full knowledge abstraction framework. Instead of merely remembering more, it transforms low-density raw logs into high-value, cross-task decision assets through dedicated structuring, retrieval, and reasoning sub-modules.

Experimental results show that PlugMem not only improves an agent's success rate in complex environments but also reduces context costs during inference. That is the real shift here: agent memory stops behaving like a compressed logbook and starts behaving like reusable experience.

Beyond the Condensed Logbook: The Problem with LLM Agent Memory

When designing long-term memory for LLM agents, a practical challenge quickly becomes apparent: raw interaction trajectories are simply too long. Feeding them all into the context window is inefficient and often overwhelms the model. Storing them in a memory system, on the other hand, can create a noisy and unmanageable repository.

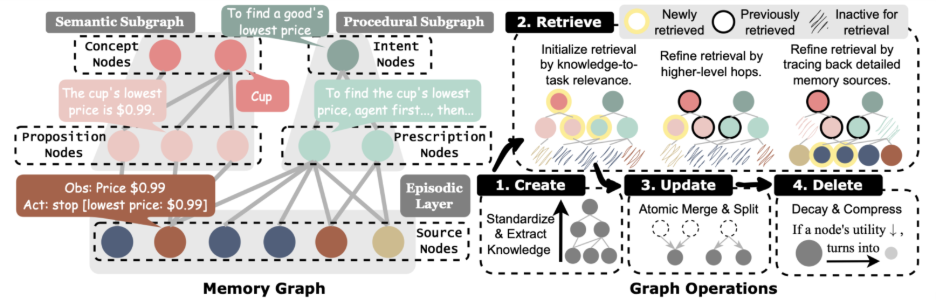

Consequently, the community began experimenting with compressed memory solutions, from dialogue summaries and vector retrieval to knowledge graphs with relational structure.

PlugMem reframes agent memory as reusable knowledge instead of a shorter activity log.

These methods help with the context length problem, but they often compress only the form of the information, not its essence. In other words, they turn a long activity log into a shorter one.

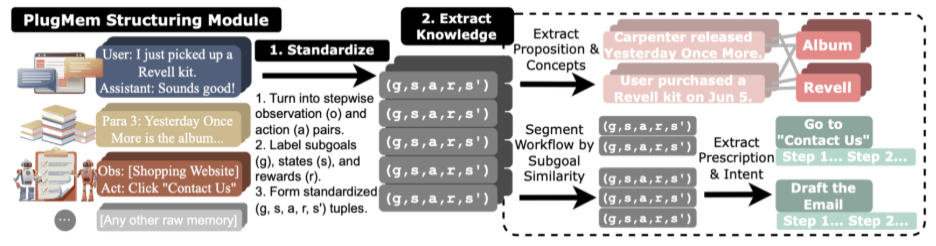

For example, imagine a user asks an agent to order an apple. The most valuable memory is not the raw log of a previous task, but the distilled knowledge that, to order an item, the agent should open website B, use the search bar, choose a product, and add it to the cart. That insight might come from a previous task where the agent ordered a cup:

User: Please order a cup for me.

Agent: To order a cup, I'll first try website A... The user isn't registered on website A... I'll try website B... Success!

If this interaction is merely summarized, it might become: "User asked me to order a cup. I opened website A, then website B." A memory structured as knowledge, however, would guide the agent to try website B first when ordering the apple. A memory derived from simple compression would only help the agent recall past actions, not the logic of why website A should be skipped.
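To make the contrast concrete, here is a minimal Python sketch. The `Step` record and the `summarize` and `distill` helpers are hypothetical, and the hand-written rule only stands in for whatever extraction PlugMem actually performs (the paper presumably relies on an LLM for this step). The point is that the summary preserves the order of events, while the distilled rule preserves what worked and why the alternative failed.

```python
from dataclasses import dataclass


@dataclass
class Step:
    site: str          # website the agent tried
    succeeded: bool    # whether the attempt worked
    note: str = ""     # why it failed, if it did


def summarize(goal: str, steps: list[Step]) -> str:
    """Compression-style memory: a shorter log of what happened, in order."""
    sites = ", then ".join(f"website {s.site}" for s in steps)
    return f"User asked me to {goal}. I opened {sites}."


def distill(steps: list[Step]) -> str:
    """Knowledge-style memory (toy rule): keep what worked and why the rest failed."""
    good = [s.site for s in steps if s.succeeded]
    bad = [f"website {s.site} ({s.note})" for s in steps if not s.succeeded]
    rule = f"To order an item, use website {good[0]}" if good else "No working site found"
    if bad:
        rule += f"; skip {', '.join(bad)}"
    return rule + "."


if __name__ == "__main__":
    cup_log = [Step("A", False, "user not registered"), Step("B", True)]
    print(summarize("order a cup", cup_log))
    print(distill(cup_log))
```

For the cup trajectory, `summarize` reproduces the log-style sentence above, while `distill` yields a rule that sends the next task straight to website B and records why website A was skipped.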

PlugMem's Philosophy: From Remembering More to Useful Knowledge

PlugMem starts by reframing the problem itself: what if an agent's memory did not just chronicle the past, but actively distilled useful experience from it?

That requires the system to process scattered interaction logs and transform them into more abstract, stable units of knowledge.

To achieve this, the authors draw on the classic cognitive-science split between different kinds of knowledge and organize long-term memory into two higher-density forms:

- Propositional knowledge: Facts about the world, entities, or users. For example: "The user is allergic to dairy products," or "Certain shopping sites usually offer price sorting."

- Prescriptive knowledge: Action guidance for a specific situation. For example: "To inspect a product's price range, sort low-to-high to find the minimum, then sort high-to-low to find the maximum."

Once this knowledge is consolidated, the agent no longer needs to sift through lengthy histories in a new task environment. It can invoke higher-level insights directly. Memory stops being a system burden and becomes a reusable decision asset.
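One way to picture the two knowledge types is as typed records that get rendered into a short prompt block in place of raw history. This is a sketch under assumptions: `Kind`, `KnowledgeUnit`, and `render` are illustrative names, not PlugMem's actual data model.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    PROPOSITIONAL = "fact"       # what is true about the world, entities, or users
    PRESCRIPTIVE = "procedure"   # what to do in a specific situation


@dataclass(frozen=True)
class KnowledgeUnit:
    kind: Kind
    text: str
    situation: str = ""  # trigger condition; only used for prescriptive knowledge


def render(units: list[KnowledgeUnit]) -> str:
    """Turn consolidated knowledge into a compact prompt block instead of raw history."""
    lines = []
    for u in units:
        if u.kind is Kind.PRESCRIPTIVE:
            lines.append(f"[{u.kind.value}] When {u.situation}: {u.text}")
        else:
            lines.append(f"[{u.kind.value}] {u.text}")
    return "\n".join(lines)


if __name__ == "__main__":
    units = [
        KnowledgeUnit(Kind.PROPOSITIONAL, "The user is allergic to dairy products."),
        KnowledgeUnit(
            Kind.PRESCRIPTIVE,
            "sort low-to-high to find the minimum, then high-to-low to find the maximum.",
            situation="inspecting a product's price range",
        ),
    ]
    print(render(units))
```

Keeping the situation separate from the action is a design choice that makes prescriptive items easy to match against a new task before they are injected into context.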

How PlugMem Works: A Pluggable Memory Module

In implementation terms, PlugMem is pragmatic. It is not a brand-new agent framework but a memory plugin that can be embedded into existing agent systems without rewriting the whole stack.

That design choice cleanly decouples memory management from task execution and turns memory into an infrastructure layer.
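The decoupling can be sketched as a small interface that the agent loop depends on. The `MemoryPlugin` protocol and its `ingest`/`recall` methods are hypothetical names chosen for illustration; the article does not describe PlugMem's actual API. The two toy implementations show that swapping memory strategies requires no change to `run_agent`.

```python
from typing import Protocol


class MemoryPlugin(Protocol):
    """Hypothetical interface: anything with these two methods can serve as agent memory."""
    def ingest(self, trajectory: list[str]) -> None: ...
    def recall(self, task: str) -> str: ...


class NoMemory:
    """Baseline plugin: stores nothing, recalls nothing."""
    def ingest(self, trajectory: list[str]) -> None:
        pass

    def recall(self, task: str) -> str:
        return ""


class LastTrajectory:
    """Naive plugin: replays the most recent raw log (the 'diary' approach)."""
    def __init__(self) -> None:
        self._last: list[str] = []

    def ingest(self, trajectory: list[str]) -> None:
        self._last = list(trajectory)

    def recall(self, task: str) -> str:
        return "\n".join(self._last)


def run_agent(task: str, memory: MemoryPlugin) -> str:
    """The agent only talks to the interface, so memory can be swapped freely."""
    context = memory.recall(task)
    prompt = f"Memory:\n{context}\n\nTask: {task}" if context else f"Task: {task}"
    # A real agent would send `prompt` to an LLM and act; here we record a stub trajectory.
    memory.ingest([f"attempted: {task}"])
    return prompt
```

A knowledge-based memory such as PlugMem would slot in as a third implementation of the same two methods, leaving the execution loop untouched.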

The PlugMem pipeline separates memory structuring, retrieval, and reasoning so the agent can reuse abstract knowledge across tasks.

The module consists of three sub-modules that work together:

- Structuring module: Processes raw trajectories and breaks them into semantic memory, procedural memory, and episodic memory, then organizes them into a knowledge graph.

- Retrieval module: Searches that graph for the most useful memory based on the current task and the kind of knowledge the agent needs.

- Reasoning module: Filters and compresses the retrieved knowledge so it better matches the current task context and remains efficient to use.
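The data flow through the three sub-modules can be sketched in a few dozen lines. This is a toy, not the paper's method: structuring links memory items that share a tag, retrieval seeds on tag overlap with the task and expands one hop through the graph while keeping only the requested memory kinds, and reasoning greedily keeps items under a word budget as a crude stand-in for LLM-based filtering and compression. All names (`Memory`, `structure`, `retrieve`, `reason`) are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    id: str
    kind: str              # "semantic", "procedural", or "episodic"
    text: str
    tags: frozenset[str]   # entities or topics the item mentions


def structure(items: list[Memory]) -> dict[str, set[str]]:
    """Structuring (toy): connect items that share a tag, forming a simple graph."""
    by_tag: dict[str, list[str]] = defaultdict(list)
    for m in items:
        for t in m.tags:
            by_tag[t].append(m.id)
    graph: dict[str, set[str]] = {m.id: set() for m in items}
    for ids in by_tag.values():
        for a in ids:
            graph[a].update(b for b in ids if b != a)
    return graph


def retrieve(task_tags: set[str], kinds: set[str],
             items: dict[str, Memory], graph: dict[str, set[str]]) -> list[Memory]:
    """Retrieval (toy): seed on tag overlap, expand one hop, keep only the wanted kinds."""
    seeds = {i for i, m in items.items() if m.tags & task_tags}
    hits = seeds | {n for s in seeds for n in graph[s]}
    # Rank by overlap with the task (descending), breaking ties by id for determinism.
    ranked = sorted(hits, key=lambda i: (-len(items[i].tags & task_tags), i))
    return [items[i] for i in ranked if items[i].kind in kinds]


def reason(hits: list[Memory], budget_words: int) -> str:
    """Reasoning (toy): greedily keep ranked items that fit a word budget."""
    kept, used = [], 0
    for m in hits:
        n = len(m.text.split())  # word count as a crude proxy for tokens
        if used + n <= budget_words:
            kept.append(m.text)
            used += n
    return " ".join(kept)


if __name__ == "__main__":
    mems = [
        Memory("p1", "procedural", "To order an item, use website B.",
               frozenset({"order", "shopping"})),
        Memory("s1", "semantic", "The user has no account on website A.",
               frozenset({"website A", "shopping"})),
        Memory("e1", "episodic", "Ordered a cup via website B after website A failed.",
               frozenset({"cup"})),
    ]
    items = {m.id: m for m in mems}
    graph = structure(mems)
    hits = retrieve({"order"}, {"procedural", "semantic"}, items, graph)
    print(reason(hits, budget_words=20))
    print(reason(hits, budget_words=10))
```

In the demo, asking for procedural and semantic memory about ordering returns the "use website B" procedure plus the linked fact about website A; tightening the word budget keeps only the procedure.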

Ablation studies make the division of labor clear. The structuring module improves memory quality. The retrieval module improves relevance. The reasoning module improves efficiency.

PlugMem organizes semantic, procedural, and episodic memory into a graph that is easier to retrieve and reuse.

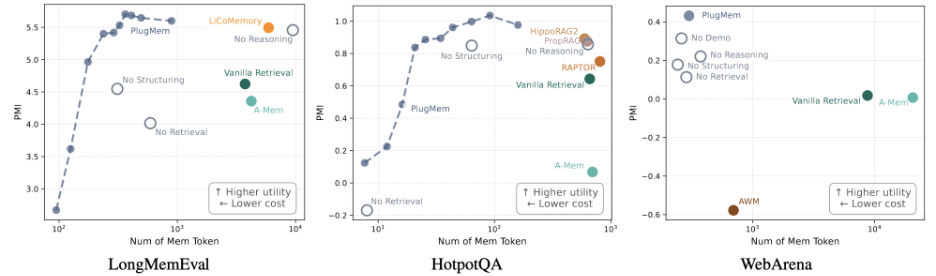

Experimental Validation: Better Performance with Fewer Memory Tokens

To validate the approach, the authors deployed PlugMem across three distinct agent scenarios:

- LongMemEval: factual consistency in long-term conversations.

- HotpotQA: multi-hop knowledge reasoning.

- WebArena: decision-making in a complex web interaction environment.

Together, these tasks cover the main memory demands of modern agents: recalling facts, combining knowledge, and taking actions.

Across evaluation settings, PlugMem improves memory usefulness before longer context turns into noise.

The results show that even without task-specific tuning, PlugMem delivers consistent gains across all three benchmarks. Just as important, the number of memory tokens consumed during inference drops substantially.

That is why PlugMem matters. Its advantage is not just remembering more. It is packing more useful decision value into each unit of memory. When memory is organized as knowledge, the agent often needs only a small amount of key information to solve tasks that would otherwise require a large amount of historical context.

The paper also introduces an important evaluation idea: information density. In simple terms, it asks how much decision-making value each memory token contributes. On this metric, PlugMem produces higher information return at lower context cost across all three tasks.
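The article does not give the paper's exact formula, so the following is one hedged way to operationalize the idea: the task-score gain that memory provides over a no-memory baseline, divided by the number of memory tokens injected. Both the function names and this definition are assumptions for illustration.

```python
def information_density(score_with: float, score_without: float, memory_tokens: int) -> float:
    """Hypothetical metric: task-score gain contributed by memory, per memory token."""
    if memory_tokens <= 0:
        raise ValueError("memory_tokens must be positive")
    return (score_with - score_without) / memory_tokens


def more_informative(a: tuple[float, int], b: tuple[float, int], baseline: float) -> str:
    """Compare two memory setups given as (score_with_memory, memory_tokens)."""
    da = information_density(a[0], baseline, a[1])
    db = information_density(b[0], baseline, b[1])
    return "a" if da > db else "b" if db > da else "tie"
```

Under this reading, two memories that lift the score by the same amount are not equally good: the one that does it with fewer tokens has the higher density.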

By plotting agent performance against memory length, the authors also recover a pattern that feels intuitive in practice:

- With very little memory, PlugMem provides limited advantage.

- In the early stage, each extra unit of memory creates a strong performance gain.

- As memory grows, performance begins to plateau and marginal return shrinks.

- Beyond a certain threshold, more memory adds noise and can reduce performance.
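Given measured (memory tokens, score) pairs, the four regimes above show up as the sign and size of the score gain per extra token between consecutive budgets. The sketch below computes those marginal gains and the smallest budget that reaches the peak score; the function names are hypothetical, and it does not reproduce the paper's figures.

```python
def marginal_gains(curve: list[tuple[int, float]]) -> list[float]:
    """Score gain per extra memory token between consecutive budgets."""
    pts = sorted(curve)
    if any(t1 == t2 for (t1, _), (t2, _) in zip(pts, pts[1:])):
        raise ValueError("memory budgets must be distinct")
    return [(s2 - s1) / (t2 - t1) for (t1, s1), (t2, s2) in zip(pts, pts[1:])]


def best_budget(curve: list[tuple[int, float]]) -> int:
    """Smallest memory budget that reaches the peak score; more memory beyond it does not help."""
    peak = max(s for _, s in curve)
    return min(t for t, s in curve if s == peak)
```

Feed it points from your own sweep over memory budgets: rising gains mark the early stage, shrinking gains the plateau, and negative gains the point where extra memory turns into noise.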

Final Thoughts: What PlugMem Changes for AI Agent Memory

The value of PlugMem is not that it invents yet another storage format or retrieval algorithm. Its real contribution is changing the question from "How do we store more and query faster?" to "What is actually worth remembering?"

That distinction matters. For a long time, agent memory discussions focused on retrieving a few relevant lines from massive logs. PlugMem argues that memory quality should be judged by how much it helps at the decision point, not by how much history it stores.

PlugMem is therefore best understood as both a technical design and a conceptual shift. By applying cognitive-science ideas like semantic and procedural memory to AI agents, it shows how machines can learn from specific events and extract reusable experience. If you are comparing memory designs for production systems, it is worth reading PlugMem alongside our guide to LLM agent architecture and persistent-memory systems like MemOS.